Digital Drug Dealing Comes to Berkeley

December 13, 2011

Wow! Berkeley is going to provide all students with “free” copies of Microsoft software for the next couple of years.

Berkeley Associate Vice Chancellor for IT and CIO Shel Waggener emailed all Berkeley students late on Tuesday night to announce that the project for Operational Excellence (OE or Bain & Company consulting, for short) would be distributing Windows and Office to us all as part of the “OE Productivity Suite” (or OEPS – pronounced “whoops”).

Here’s the email (fresh from my inbox less than 30 minutes ago):

We are pleased to announce that the campus has signed a license agreement to provide Microsoft Office and Operating System software to all students at no cost this year and next. Students will be able to download one copy of the following products and may keep the software perpetually upon graduation.

Office Professional Plus or Office for Mac Home & Business (one or the other, not both)

Microsoft Windows Desktop Operating System (OS) upgrades including Windows 7 Enterprise

The software will be available for download beginning Monday, January 9, 2012. Check the Student Technology Council’s (STC) website, http://stc.berkeley.edu, in January for download information.

This agreement is part of the Operational Excellence-sponsored Productivity Suite (PS) project. The goal of this project is to reduce complexity and costs and, at the same time, distribute licenses for the most commonly used software and tools so that everyone can work with the most current version. The Adobe agreement reached at the start of the fall semester is also part of this project.

Have not downloaded Adobe yet? Go to the STC’s “Downloads” page, http://stc.berkeley.edu/downloads.htm. Links to help with troubleshooting are on the same page. Watch for information about spring semester Adobe training and a T-shirt design contest using Adobe products.

During this academic year, ASUC President Vishalli Loomba and Graduate Assembly President Bahar Navab are partnering with the STC on assessing and advising the PS project; first, to support the adoption and use of these popular software products, and second, to gauge interest and usage for such a program over the longer term. Depending upon student feedback and students’ continued level of interest, alternatives for cost recovery for student downloads will be explored. More information about these efforts can be found on the STC’s “Downloads” page, http://stc.berkeley.edu/downloads.htm.

If you have questions, please do not hesitate to contact Vishalli, Bahar, or the STC (student.tech@berkeley.edu).

Vishalli Loomba

President, ASUC

president@asuc.org

Bahar Navab

President, Graduate Assembly

president@ga.berkeley.edu

Shel Waggener

Associate Vice Chancellor-IT and CIO

John Wilton

Vice Chancellor

Administration & Finance

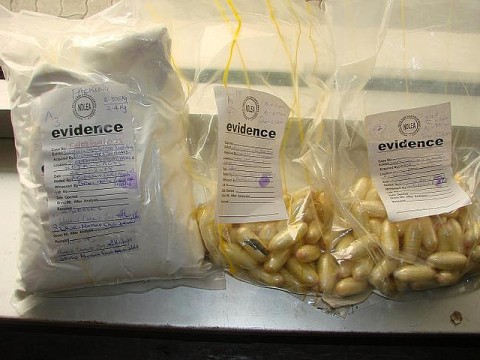

As a good friend once put it, this is DIGITAL DRUG DEALING. We’re being given “free” copies of Office & Windows now so that we don’t consider alternatives later. We’re being locked in. IT’S A TRAP.

Incredible as it might sound, Berkeley can do better – in fact, without negotiating at all, the CIO could distribute operating systems and office productivity software free to all of us for life!

I’ll have more to say about this soon…but for now, venom.

Peer review without the peers?

December 11, 2011

Academic peer review tends to be slow, imprecise, labor-intensive, and opaque.

A number of intriguing reform proposals and alternative models exist, and hopefully some of these ideas will lead to improvements. However, whether they do or not, I suspect that some form of peer review will continue to exist (at least for the duration of my career) and that many reviewers (myself included) will continue to find the process of doing the reviews to be time-consuming and something of a hassle.

The most radical solution is to shred the whole process T-Rex style.

This is sort of what has already happened in disciplines that use arXiv or similar open repositories where working papers can be posted and made available for immediate critique and citation. Such systems have their pros and cons too, but if nothing else they decrease the amount of time, money and labor that go into reviewing for journals and conferences, while increasing the transparency. As a result, they provide at least a useful complement to existing systems.

Over a conversation at CrowdConf in November, some colleagues and I came up with a related, but slightly less radical proposal: maybe you could keep some form of academic peer review, but do it without the academic peers?

Such a proposition calls into question one of the core assumptions underlying the whole process – that reviewers’ years of training and experience (and credentials!) have endowed them with special powers to distinguish intellectual wheat from chaff.

Presumably, nobody would claim that the experts make the right judgment 100% of the time, but everybody who believes in peer review agrees (at least implicitly) that they probably do better than non-experts would (at least most of the time).

And yet, I can’t think of anybody who’s ever tested this assumption in a direct way. Indeed, in all the proposals for reform I’ve ever heard, “the peers” have remained the one untouchable, un-removable piece of the equation.

That’s what got us thinking: what if you could reproduce academic peer review without any expertise, experience or credentials? What if all it took were a reasonably well-designed system for aggregating and parsing evaluations from non-experts?

The way to test the idea would be to try to replicate the outcomes of some existing review process using non-expert reviewers. In an ideal world, you would take a set of papers that had been submitted for review and had received a range of scores along some continuous scale (say, 1 to 5 – like papers reviewed for ACM Conferences). Then you would develop a protocol to distribute the review process across a pool of non-expert reviewers (say, using CrowdFlower or some similar crowdsourcing platform). Once you had review scores from the non-experts, you could aggregate them in some way and/or compare them directly against the ratings from the experts.
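To make the comparison step concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the paper names and scores are made up, the median is just one plausible way to aggregate non-expert ratings, and Spearman rank correlation is just one plausible way to measure agreement with the experts. It is not a protocol anyone has actually run.

```python
from statistics import mean, median


def rank(values):
    """Return 1-based ranks, giving tied values the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to cover the whole run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    return ranks


def pearson(xs, ys):
    """Pearson correlation; assumes neither list is constant."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def spearman(xs, ys):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(rank(xs), rank(ys))


def aggregate_crowd_scores(crowd_scores):
    """Collapse each paper's non-expert ratings into one score (median)."""
    return {paper: median(scores) for paper, scores in crowd_scores.items()}


# Hypothetical data: expert scores on a 1-5 scale, plus five
# non-expert ratings per paper on the same scale.
expert = {"paper_a": 4.5, "paper_b": 2.0, "paper_c": 3.5, "paper_d": 1.5}
crowd = {
    "paper_a": [5, 4, 4, 5, 3],
    "paper_b": [2, 3, 1, 2, 2],
    "paper_c": [3, 4, 4, 2, 3],
    "paper_d": [1, 2, 2, 1, 3],
}

crowd_agg = aggregate_crowd_scores(crowd)
papers = sorted(expert)
rho = spearman([expert[p] for p in papers], [crowd_agg[p] for p in papers])
print(f"Spearman rank correlation: {rho:.2f}")
```

A rank correlation near 1 would mean the crowd orders the papers roughly the way the experts do. Whether that counts as success depends on what you want from the review process, and a real test would need far more than four papers.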

Would it work? That depends on what you would consider success. I’m not totally confident that distributed peer review would improve existing systems in terms of precision (selecting better papers), but it might not make the precision of existing peer review systems any worse and could potentially increase the speed. If it worked at all along any of these dimensions, implementing it would definitely reduce the burden on reviewers. In my mind, that possibility – together with the fact that it would be interesting to compare the judgments of us professional experts against a bunch of amateurs – more than justifies the experiment.

A few things I learned this week

December 4, 2011

The format for this week’s post, which I intend to repeat as an occasional series, was inspired by the inimitable and brilliant Pete Warden’s “Five Short Links.”

New York City has at least one ghost zipcode – The 10048 postal code used to belong to the World Trade Center. Mail continues to be sent there.

The Super Bowl MVP is going to Disney(world|land)! – Mako informed me that Disney shoots two versions of its famous post-Super Bowl advertisements in order to use them in East and West coast markets. Turns out not only is that true, but sometimes the Super Bowl MVP even visits both theme parks in the ensuing off-season. The first such ad featured the New York Giants’ quarterback Phil Simms in 1987.

Seattle Seahawks running back Marshawn Lynch really (really!) likes Skittles – In other news, his homepage is awesome and features a familiar skyline.

Even anti-piracy advocates are pirates – Even more awesome is the fact that when a board member of the Dutch anti-piracy group BREIN offered to help the artist Melchior Rietveldt receive royalties for his work, he asked for a 33% cut (and was recorded doing so).

A “Simple Explanation” of Benford’s Law may exist – I really like Benford’s Law, but am ashamed to say I still can’t really explain why it works. I’m hoping this short essay (PDF) by Rachel Fewster will change that. Fewster claims that the explanation is “simple and intuitive” for “anyone with a basic knowledge of probability density curves and logarithms.” Hopefully, I can meet those criteria (H/T Pete Warden).
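For anyone who wants to see the law in action before reading the essay, here is a small Python sketch. Benford’s Law says a leading digit d should show up with probability log10(1 + 1/d). The choice of the first 1,000 powers of 2 as test data is my own assumption for illustration (it is a commonly cited sequence that fits the law well) and has nothing to do with Fewster’s essay. The sketch shows what the law predicts, not why it works.

```python
import math
from collections import Counter


def benford_expected(d):
    """Probability that the leading digit is d under Benford's Law."""
    return math.log10(1 + 1 / d)


def leading_digit(n):
    """First decimal digit of a positive integer."""
    return int(str(n)[0])


# Illustrative data set (an assumption): the powers of 2 from 2^1 to 2^1000.
values = [2 ** k for k in range(1, 1001)]
counts = Counter(leading_digit(v) for v in values)

for d in range(1, 10):
    observed = counts[d] / len(values)
    print(f"{d}: observed {observed:.3f}, Benford {benford_expected(d):.3f}")
```

The observed share of each leading digit should land close to the predicted share, with 1 leading about 30% of the time.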

(if nothing else, Iron Blogger has re-taught me the importance of the occasional filler post)
